The World Model Race Is Heating Up - And It Could Reshape Everything

Runway, World Labs, DeepMind, and AMI Labs are betting billions on world models—AI that understands physics, space, and causality. Here's why it matters beyond the chatbot headlines.

Something quietly significant happened in the AI industry this month, and it barely made a ripple compared to the usual chatbot headlines. Runway - the AI video startup best known for letting filmmakers generate cinematic clips from text prompts - raised $315 million at a $5.3 billion valuation. That funding news is interesting enough. But buried inside the announcement was the real story: Runway is no longer just a video company. It is now, first and foremost, a world model company.

And it is not alone.

Large language models - GPT, Claude, Gemini - are extraordinary at processing and generating text. But they have a fundamental limitation: they reason about the world through language, not through an actual understanding of how the physical world works. They can describe a ball rolling off a table. They cannot truly predict where it will land.

World models are different. They build internal representations of environments - spatial, physical, causal - and use those representations to simulate, predict, and plan. They understand that objects have mass, that gravity pulls things down, that cause precedes effect. This is the kind of reasoning that makes autonomous robots practical, that powers convincing simulations, that could eventually underpin AI systems capable of genuine physical-world planning.
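To make "simulate, predict, and plan" concrete, here is a minimal Python sketch built around the ball-and-table example. It is an illustration under loud assumptions, not how Runway, World Labs, or DeepMind build their systems: the physics is written by hand rather than learned from video, and every name in it (`BallState`, `step`, `predict_landing`, `plan_speed`) is hypothetical.

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2


@dataclass(frozen=True)
class BallState:
    x: float   # horizontal distance from the table edge (m)
    y: float   # height above the floor (m)
    vx: float  # horizontal velocity (m/s)
    vy: float  # vertical velocity (m/s); negative means falling


def step(state: BallState, dt: float) -> BallState:
    """Transition function: predict the state dt seconds from now.

    Assumption: a real world model would learn this mapping from data.
    Here it is hard-coded projectile motion with no air resistance.
    """
    vy = state.vy - G * dt
    return BallState(
        x=state.x + state.vx * dt,
        y=state.y + vy * dt,
        vx=state.vx,
        vy=vy,
    )


def predict_landing(speed: float, table_height: float, dt: float = 0.001) -> float:
    """Simulate: roll the model forward until the ball reaches the floor."""
    state = BallState(x=0.0, y=table_height, vx=speed, vy=0.0)
    while state.y > 0.0:
        state = step(state, dt)
    return state.x


def plan_speed(target_x: float, table_height: float, candidates: list[float]) -> float:
    """Plan: choose the candidate speed whose predicted landing is closest to the target."""
    return min(
        candidates,
        key=lambda speed: abs(predict_landing(speed, table_height) - target_x),
    )
```

Calling `predict_landing(2.0, 1.0)` gives a landing point of roughly 0.9 m from the table edge, and `plan_speed(0.9, 1.0, [1.0, 2.0, 3.0])` picks `2.0`. The point is the loop itself: an internal state, a function that advances it, and a planner that tries actions inside the simulation before committing to one in the real world.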

The gap between LLMs and world models is, in many ways, the gap between a very well-read person who has never left the library and someone who has actually lived in the world.

Four serious contenders are now in the open:

Runway has earmarked its $315 million Series E explicitly for pre-training "the next generation of world models." CEO Cristobal Valenzuela has said the technology will tackle challenges in medicine, climate, energy, and robotics - a sharp departure from the company's roots in entertainment. Runway released its first world model in December 2025 and views it as central to everything going forward.

World Labs, founded by Fei-Fei Li - the Stanford computer scientist who gave us ImageNet and helped spark the deep learning revolution - just raised $1 billion to advance what it calls "spatial intelligence." Its Marble model creates interactive 3D worlds from image or text prompts. AMD, Nvidia, Autodesk (a $200M check), Fidelity, and others backed the round, pointing to serious cross-industry demand.

Google DeepMind released Project Genie, a world generator, making its Genie family of models publicly available. DeepMind has the research depth and compute resources to play a long game here.

Yann LeCun - who spent years as Meta's chief AI scientist arguing that world models, not LLMs, are the path to genuine intelligence - left Meta to found AMI Labs, targeting a $3.5 billion valuation. His bet is that the field is finally ready to prove him right.

For years, world models were a theoretical aspiration. The compute requirements were too steep, the training data too hard to curate, the benchmarks too immature. What has changed?

Three things have converged. First, compute is finally cheap and available enough - providers like CoreWeave are expanding capacity rapidly, and Runway just signed a deal with CoreWeave for exactly this reason. Second, video generation models have served as a stepping stone: to generate physically plausible video, a model must implicitly learn something about how the world works. In effect, Runway's video models acted as pre-training for its world model work. Third, demand has crystallized. Robotics companies, game studios, defense contractors, medical simulation firms - all of them need something closer to physical-world reasoning than text generation can provide.

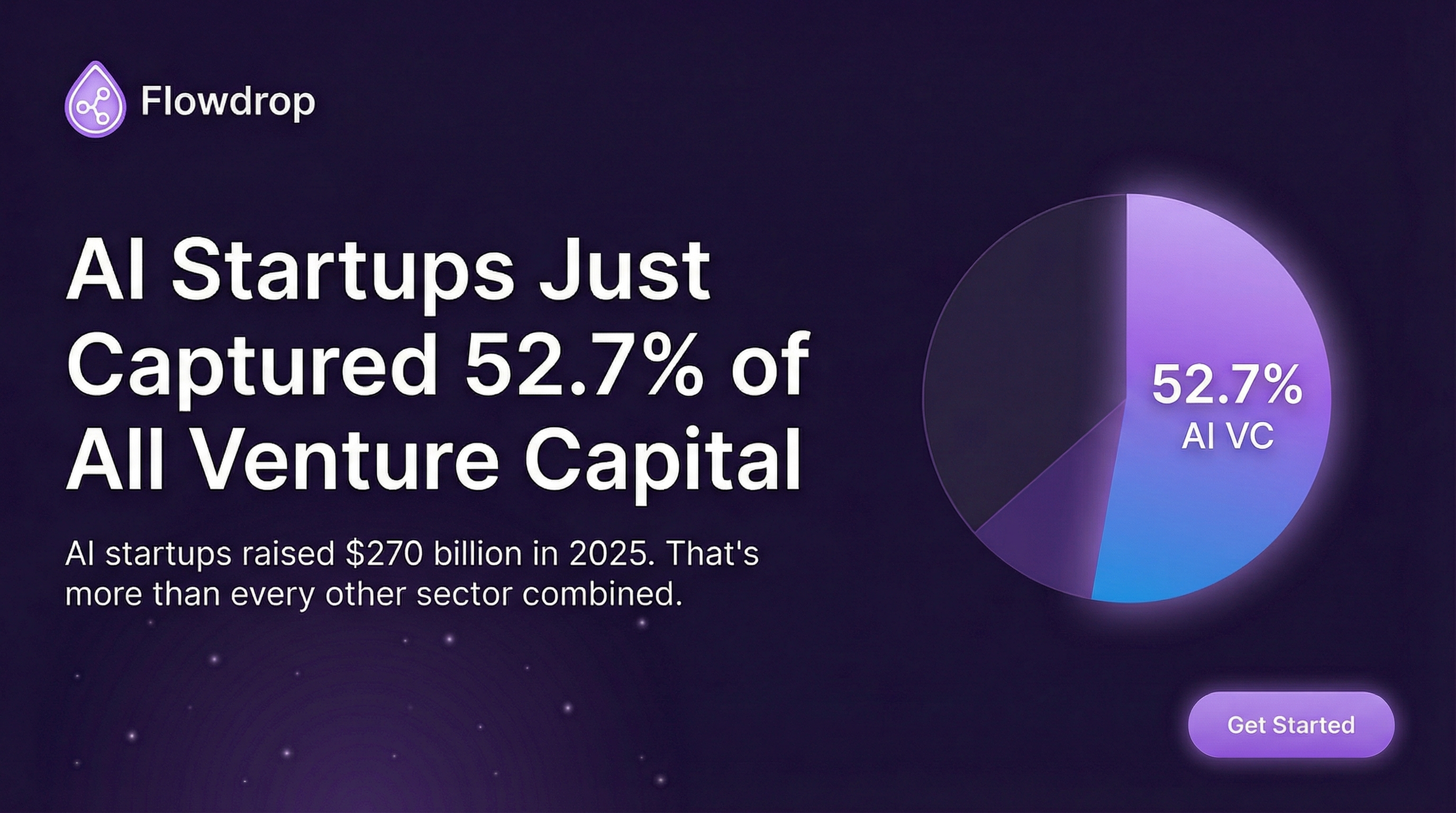

The $76 billion that U.S. AI startups raised through mega-rounds in 2025 was largely concentrated in LLM and application-layer companies. The first months of 2026 suggest world models are becoming the next destination for serious capital.

If you are building products today, world models are not yet a practical tool. The current generation is early-stage and primarily research-grade. But the category matters for a few strategic reasons.

Robotics becomes viable faster. Companies like Skild AI - which just raised $1.4 billion from SoftBank and Nvidia at a $14 billion valuation - are building AI for physical robots. World models are what will make those robots genuinely adaptive rather than scripted. The timeline on warehouse automation, surgical robots, and autonomous vehicles just got meaningfully shorter.

Gaming and simulation are next. Google's Genie already lets users build interactive environments from prompts. World Labs has similar ambitions. The cost of game world generation is about to drop precipitously, which will reshape the economics of the entire industry.

Enterprise use cases are coming sooner than expected. Physical simulation - testing how a building responds to an earthquake, how a drug interacts with a cell, how a supply chain fails under stress - is currently expensive, specialized work. World models will commoditize parts of it.

There is a deeper question underneath the funding headlines. LeCun has argued for years that the path to human-level AI does not run through language but through grounded understanding of the physical world. The LLM camp, represented by OpenAI, Anthropic, and Google's language division, has dominated both the press and the funding for the past several years.

The world model wave suggests the field is broadening. These are not competing approaches so much as complementary ones - the best systems will likely combine both. Still, the shift in capital and talent toward spatial intelligence is significant. It signals that the research community increasingly believes the next frontier is not making language models bigger, but making AI systems that actually understand the world they are talking about.

Fei-Fei Li has spent her career arguing that vision and physical grounding are essential to intelligence. With $1 billion in fresh capital and some of the world's leading chip companies backing her, the market has finally caught up to her thesis.

The world model race is not a footnote to the AI boom - it may be the next main chapter. Runway, World Labs, DeepMind, and a growing cohort of startups are betting that the next breakthrough in AI comes not from more tokens, but from systems that understand physics, space, and causality. For anyone building AI-adjacent products, this is worth watching closely. The applications - in robotics, simulation, gaming, and enterprise software - are going to arrive faster than most people expect.

Sources: TechCrunch, Reuters, SiliconANGLE

About Flowdrop Team

We build AI workflow automation tools for non-coders. Our mission is to make automation accessible to everyone, so you can focus on work that actually matters.